Ever since the latest version of ChatGPT launched a few weeks ago, writers have worried that we might be the first profession to be replaced by AI. Whether that worry is warranted is beyond the scope of this post, but 3D modellers can now be added to the list of jobs potentially at risk: OpenAI has recently unveiled a comparable system that turns text into 3D models.

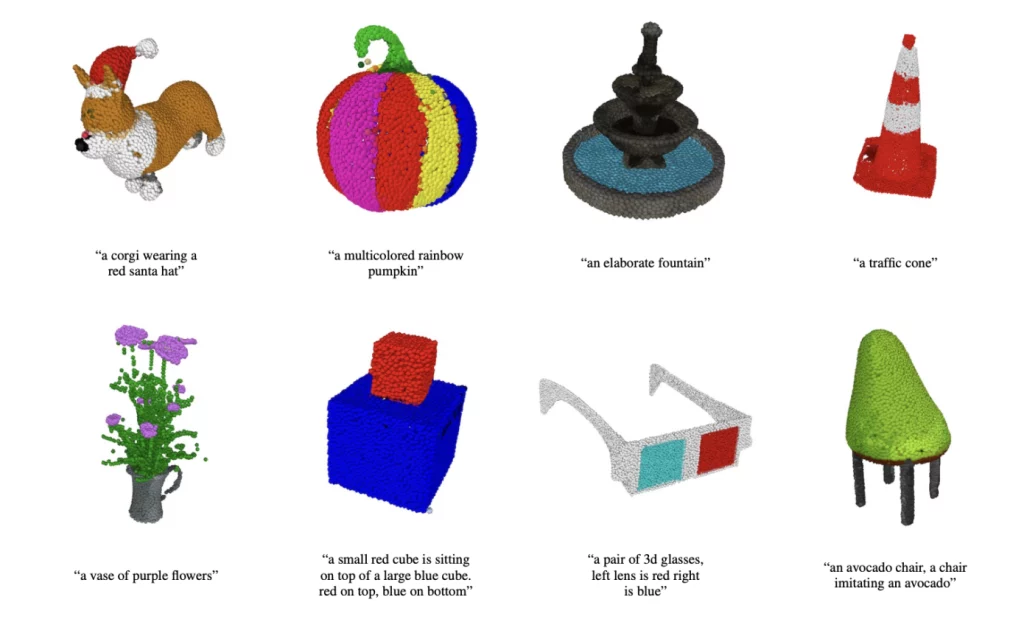

OpenAI has made the code available on GitHub, alongside a paper titled “Point-E: A System for Generating 3D Point Clouds from Complex Prompts”. Although it is not the first text-to-3D application, it is likely to receive the most attention over the coming weeks. With just some basic Python skills, anyone can use OpenAI’s “Point-E” to generate 3D models from text prompts.

Point-E is so named because it produces point clouds rather than tessellated or surfaced models. By generating point clouds, Point-E can produce models far more quickly than other AI text-to-3D techniques, using only a small fraction of the computing resources. On a single GPU, Point-E can create a 3D model from a text prompt in just a few minutes.
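To make the distinction concrete, a point cloud is simply a list of coordinates with no faces connecting them. The sketch below is a minimal illustration in plain Python and does not call Point-E at all: it samples a synthetic sphere as a stand-in for generated output and serialises it as an ASCII PLY file, a common interchange format for point clouds. The function names `sample_sphere_points` and `to_ascii_ply` are hypothetical, chosen only for this example.

```python
import math
import random


def sample_sphere_points(n, radius=1.0, seed=0):
    """Return n (x, y, z) points on a sphere: a stand-in for generated output."""
    rng = random.Random(seed)
    points = []
    for _ in range(n):
        # Normalising a Gaussian vector gives a uniformly random direction.
        x, y, z = (rng.gauss(0.0, 1.0) for _ in range(3))
        norm = math.sqrt(x * x + y * y + z * z) or 1.0
        points.append((radius * x / norm, radius * y / norm, radius * z / norm))
    return points


def to_ascii_ply(points):
    """Serialise points as ASCII PLY text: vertices only, no face element."""
    header = [
        "ply",
        "format ascii 1.0",
        f"element vertex {len(points)}",
        "property float x",
        "property float y",
        "property float z",
        "end_header",
    ]
    body = [f"{x:.6f} {y:.6f} {z:.6f}" for x, y, z in points]
    return "\n".join(header + body) + "\n"


# Assumed size for illustration only; not a claim about Point-E's output.
cloud = sample_sphere_points(1024)
ply_text = to_ascii_ply(cloud)
```

Writing `ply_text` to a `.ply` file gives something most mesh and scan-processing tools can open. Note that the file has no face element, which is exactly why it cannot be sent to a printer as-is.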

The point clouds are fairly low-resolution, so the models are quite coarse. However, OpenAI also provides a point-cloud-to-mesh method that fits a tessellated surface to the points. The resulting mesh is displayed below. A point cloud cannot be 3D printed directly, so any text-to-3D-print workflow built on Point-E must first convert its output into a model suitable for printing.
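Once a mesh exists, the usual hand-off to a slicer is an STL file, which is just a list of triangles with their normals. The following is a rough sketch of that last step in plain Python, assuming the mesh is already available as vertices and triangular faces. The tetrahedron is a hand-written stand-in, not Point-E output, and `facet_normal` and `to_ascii_stl` are hypothetical helper names for this example.

```python
import math


def facet_normal(a, b, c):
    """Unit normal of triangle (a, b, c), following the right-hand rule."""
    ux, uy, uz = (b[i] - a[i] for i in range(3))
    vx, vy, vz = (c[i] - a[i] for i in range(3))
    # Cross product of the two edge vectors.
    nx, ny, nz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0.0:
        return (0.0, 0.0, 0.0)  # degenerate triangle
    return (nx / length, ny / length, nz / length)


def to_ascii_stl(vertices, faces, name="model"):
    """Serialise a triangle mesh as ASCII STL text."""
    lines = [f"solid {name}"]
    for i, j, k in faces:
        a, b, c = vertices[i], vertices[j], vertices[k]
        nx, ny, nz = facet_normal(a, b, c)
        lines.append(f"  facet normal {nx:.6f} {ny:.6f} {nz:.6f}")
        lines.append("    outer loop")
        for x, y, z in (a, b, c):
            lines.append(f"      vertex {x:.6f} {y:.6f} {z:.6f}")
        lines.append("    endloop")
        lines.append("  endfacet")
    lines.append(f"endsolid {name}")
    return "\n".join(lines) + "\n"


# Stand-in mesh: a tetrahedron with faces wound so the normals point outward.
vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
faces = [(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)]
stl_text = to_ascii_stl(vertices, faces, name="tetrahedron")
```

A real mesh derived from a coarse point cloud would typically also need checking for holes and non-manifold edges before it prints cleanly; this sketch covers only the file export.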

You could certainly do that with the SolidWorks ScanTo3D function (or something similar), which is designed specifically for converting point clouds from scanned data into models for reverse engineering. It won’t be long before text-to-3D, and ultimately text-to-AM (additive manufacturing), makes the same kind of startling advances as DALL-E, Stable Diffusion, and ChatGPT.

If you want to experiment with Point-E, you can obtain the source code from GitHub at this link. Alternatively, if you just want to read about it, you can access the paper published by OpenAI here.

3D APAC Pty Ltd Copyright © 2023. All rights reserved